Down the systems thinking rabbit hole!

Or, applying systems thinking to the BA role itself

Okay, so I’m deep down a rabbit hole. A BA existential crisis. 🕳️

On the back of writing about team information flows, I realised that I had done a lot of mulling on the things business analysts do, the parts and components, but little real thinking about the system business analysts work within. As a result, I’ve spent the last month in a mad panic, simultaneously trying to teach myself systems thinking and to apply it to the BA role.

I’m not entirely sure it worked. But at the very least, I learned a bunch.

Which I know is not a strong sell to read this article. But if you’re thinking that you don’t want to go among mad people—me in this scenario—let me remind you what the cat in Alice in Wonderland said:

“Oh, you can’t help that,” said the Cat: “we’re all mad here. I’m mad. You’re mad.”

“How do you know I’m mad?” said Alice.

“You must be,” said the Cat, “or you wouldn’t have come here.”

And on that note: systems thinking. But first TL;DR:

⮑ Systems thinking is a logical and holistic analysis approach

⮑ Theory of constraints is a systems thinking approach

⮑ Current reality trees (CRT) help to identify root causes

⮑ Using CRT on the BA role shows how we support the system

⮑ Poor business analysis has massive negative impacts

⮑ Good business analysis has huge positive impact

⮑ If we focus on the system, we can drive a bigger impact!

An intro to systems thinking

Note: Feel free to skip the context setting if you’re familiar with systems thinking, theory of constraints, and current reality trees!

Like most BAs or thinkers of any kind, when I first came across systems thinking, I had a “yeah, of course” reaction. Systems thinking is a way of making sense of the complexity of reality by looking at it in terms of wholes and relationships, rather than by breaking it down into its parts.

In short, systems thinking is an approach that focuses on the interplay between things, not just the things themselves. You know, understand the context before messing with things… blah blah blah… all very natural BA stuff.

A system is never the sum of its parts; it’s the product of their interaction.

But bear with me because we’re not down the rabbit hole quite yet. 🐇

Theory of constraints

Within the umbrella of ‘systems thinking’, there’s a management theory called Theory of Constraints (TOC) created by Dr. Eliyahu Goldratt. The central idea of TOC is that every system has constraints, and focusing on reducing the impact of a constraint is the most effective way to improve the system.

And like all (read: none) great thinkers, he chose to explain his ideas as a novel. No, I am not at all joking, and boy was I surprised when what I thought was the fictional introduction added for engagement purposes just kept on going and going … for an entire book.

Luckily, it is not the only method he used to communicate his ideas, and there are plenty of books, videos, and articles on his theories. Phew!

Within TOC there are five logical tools: 🍂 Current reality tree, 🌳 Future reality tree, 🌿 Prerequisite Tree, 🌱 Transition Tree, and the 🌩️ Evaporating cloud.

[Side note: Best named logical tools, no?]

For this article, I’ve focused primarily on the Current Reality Tree (CRT) as I was mostly interested in understanding how BAs affect the system.

The realities of a Reality Tree

A Reality Tree (RT) is, at heart, a cause-and-effect diagram. It maps the relationships between undesirable symptoms to identify root causes. Of course, there’s a bunch more to constructing a good Reality Tree (including logical tests up the wazoo), but fundamentally it is a cause-and-effect diagram.

Like other root cause analysis tools, Current Reality Trees are really powerful. By being explicit about what is being observed in the system, you are forced to re-examine what is happening and the assumptions you are making. And the Theory of Constraints holds that by identifying the root causes, you can focus attention on resolving the correct problem and receive the maximum benefit for your efforts.

Which all sounds great!

But mapping reality is not simple, because reality isn’t simple, as business analysts already know. This is why context diagrams are a recommended (if not required) step when establishing a project.

But compared to the logical foundations of a reality tree, context diagrams feel like child’s play.

The TOC thinking process is composed of logical tools. The emphasis here is on the word “logic” for good reason. A lot of problem-analysis tools use graphical representations. Flowcharts, “fishbone” diagrams, and tree and affinity diagrams are typical examples. But none of these diagrams are, strictly speaking, logic tools, because they don’t incorporate any rigorous criteria for validating the connections between one element and another. In most cases, they’re somebody’s perception of the relationship.

Challenge accepted. 👏

How to read the (reali-tea) leaves?

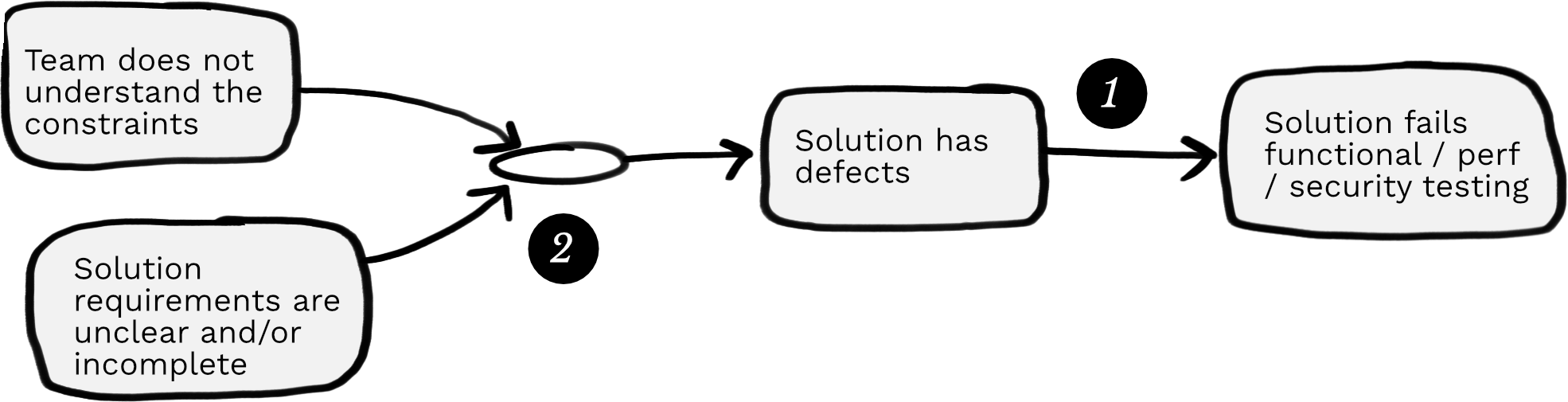

Once you get the hang of them, reality trees are relatively easy to read. Boxes are statements about an observable effect, and the arrows show the causal relationship between two effects. An effect without a cause is a root cause.

To read, refer to boxes as “IF”, arrows as “THEN” and circles as “AND”. Some examples:

❶ IF “Solution has defects”, THEN “Solution fails functional / perf / security testing”.

❷ IF “Team does not understand the constraints” AND “Solution requirements are unclear and/or incomplete”, THEN “Solution has defects”.
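For the code-minded, here is a minimal sketch of those reading rules in Python. It is only an illustration, not part of the TOC method: the `Link`, `read_aloud`, and `root_causes` names are made up for this example, and the two links are the examples above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Link:
    """One arrow in the tree (hypothetical structure for illustration)."""
    causes: tuple[str, ...]  # one cause = plain arrow; several = joined by an AND circle
    effect: str


# The two example links from the text above.
LINKS = [
    Link(("Solution has defects",),
         "Solution fails functional / perf / security testing"),
    Link(("Team does not understand the constraints",
          "Solution requirements are unclear and/or incomplete"),
         "Solution has defects"),
]


def read_aloud(link: Link) -> str:
    """Render a link as an IF ... AND ... THEN sentence."""
    causes = " AND ".join(f'"{c}"' for c in link.causes)
    return f'IF {causes}, THEN "{link.effect}".'


def root_causes(links: list[Link]) -> list[str]:
    """A root cause is a box that appears as a cause but never as an effect."""
    effects = {link.effect for link in links}
    causes = {c for link in links for c in link.causes}
    return sorted(causes - effects)


for link in LINKS:
    print(read_aloud(link))
print(root_causes(LINKS))
```

In this tiny two-link tree, the boxes with no incoming arrow are the two statements feeding “Solution has defects”, so those are what `root_causes` reports.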

Okay, enough context ...

Let’s get back to the actual point.

The reason I was down this systems thinking causal map rabbit hole is that I wanted to understand how BAs influence the systems within which we operate. And I wanted to do that in a way that I could reason about.

It is all well and good to claim that we’re instrumental, but proving it would be better, no?

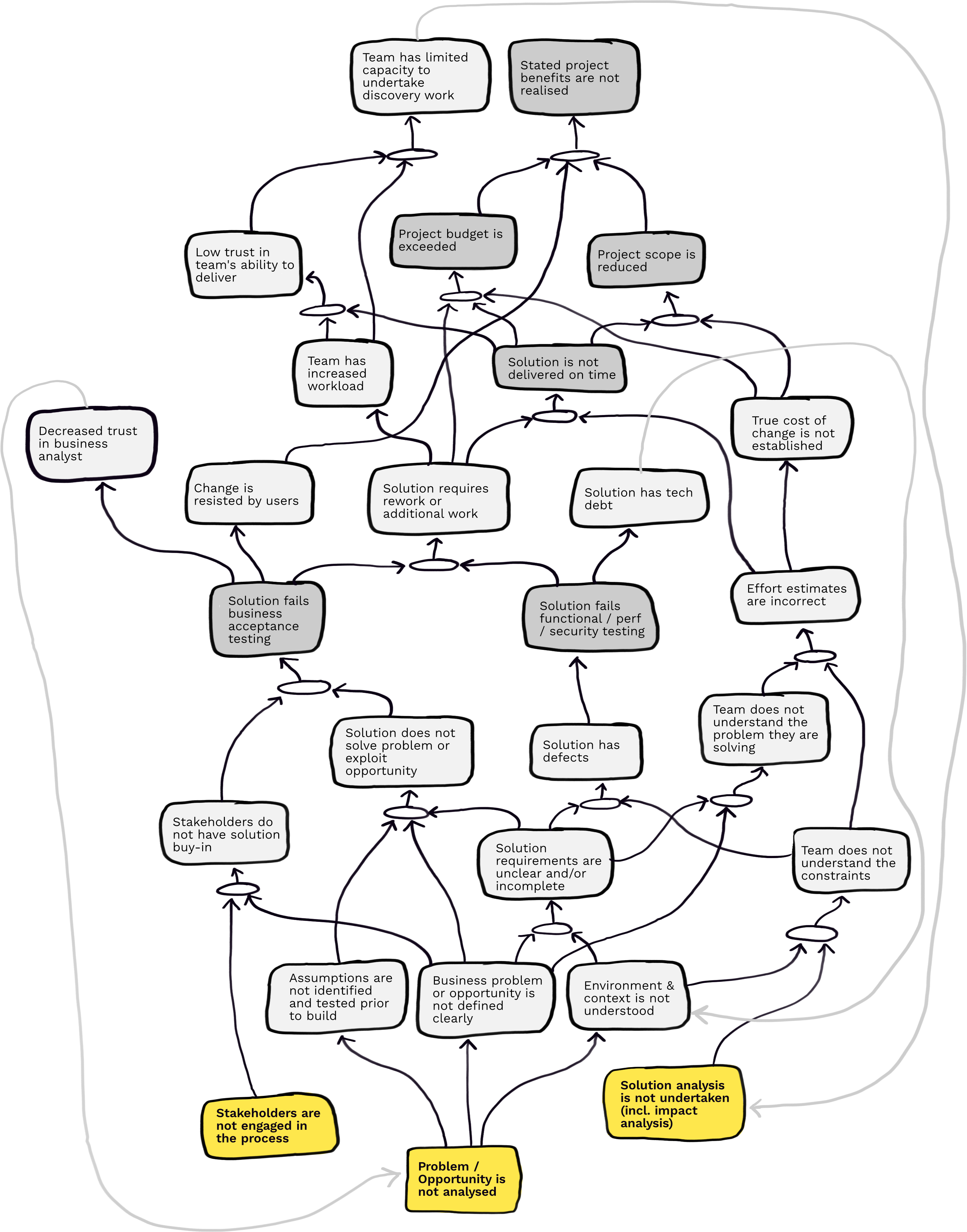

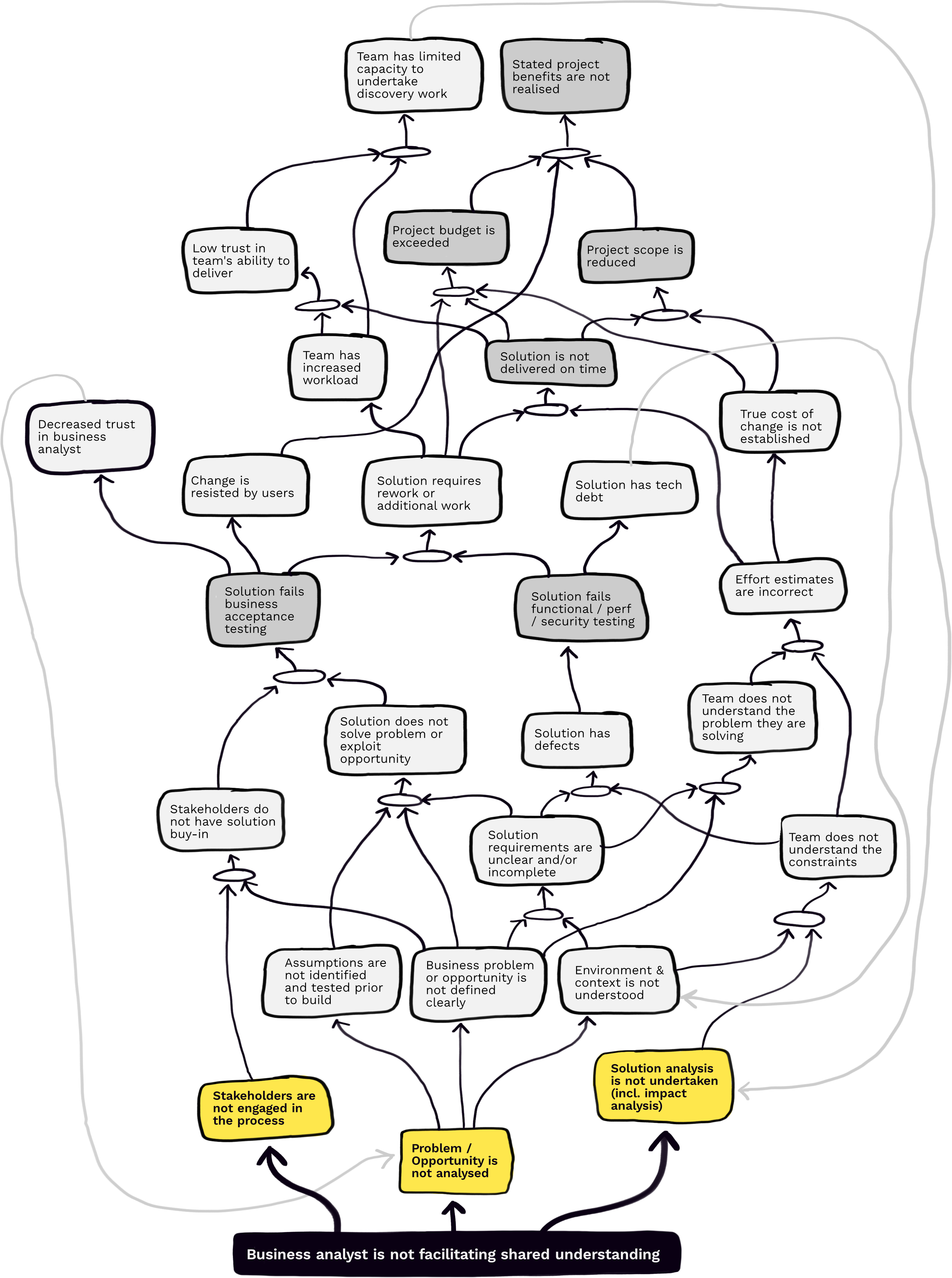

So, I created a (hypothetical) Current Reality Tree for all the things that go wrong with business analysis work based on my experience. Call it a mash up of the bad things that I’ve seen more than once.

Now it is important to note up front that I am wildly self-taught in this method! 😅

And perhaps even more importantly, this is a hack. The CRT approach is designed to surface how the different parts of a real system work together, and here I’m using it to understand how things can go wrong. So I’ve included more unhappy stuff than you’d usually include (here’s hoping).

As a result, I would read many of the “ANDs” as “AND/ORs”: it is less likely that both causes will occur together in the real world, but either one would contribute to the unintended consequence. For example, either “Solution requires rework” or “Effort estimates are incorrect” can result in “Solution not delivered on time”. Or both, of course.
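To make the difference between a strict “AND” and my looser “AND/OR” reading concrete, here is a small Python sketch. The `effect_occurs` function and its `mode` parameter are hypothetical, invented purely for this illustration.

```python
def effect_occurs(causes: list[str], observed: set[str], mode: str = "and") -> bool:
    """Decide whether an effect follows from the causes we have observed.

    mode="and": strict reading, every cause must be present.
    mode="or":  the looser AND/OR reading, any one cause is enough.
    """
    check = all if mode == "and" else any
    return check(cause in observed for cause in causes)


# Causes of "Solution not delivered on time", from the example above.
causes = ["Solution requires rework", "Effort estimates are incorrect"]

# Suppose only one of the two causes is actually seen on the project.
observed = {"Effort estimates are incorrect"}

print(effect_occurs(causes, observed, mode="and"))  # strict reading
print(effect_occurs(causes, observed, mode="or"))   # AND/OR reading
```

With only one cause observed, the strict reading says the effect does not follow, while the AND/OR reading says it does, which is closer to how I have seen late delivery play out.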

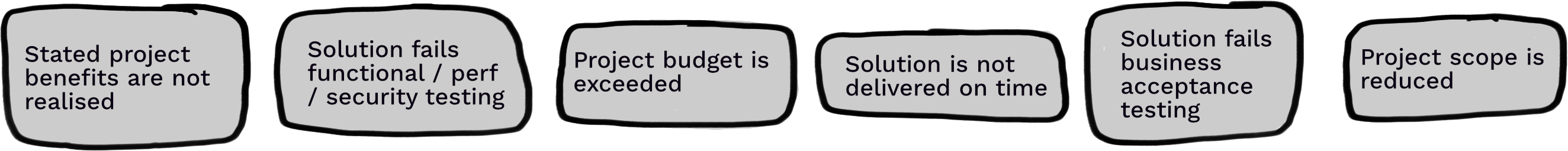

And for the map, I started with the following six big undesirable effects:

But with all those disclaimers out of the way, here’s the whole map. I don’t think it is overly dramatic to say:

Welcome to BA hell!🔥

My six (!) undesired effects turned into this whole mess. 👇 Wow.

This is definitely complicated, wouldn’t you agree? ☝️

But what’s driving this complexity?

I traced almost every issue back to three root causes:

❶ Stakeholders not engaged in the process

❷ Problem or opportunity is not analysed

❸ Solution analysis not undertaken

But let's push that further ... what causes this?

Yulia Kosarenko claims that the most important thing business analysts do is build shared understanding of the business requirements across all stakeholders.

Yip. Us.

Business analysts have an outsized impact on the entire delivery system. We are kinda important. 👑

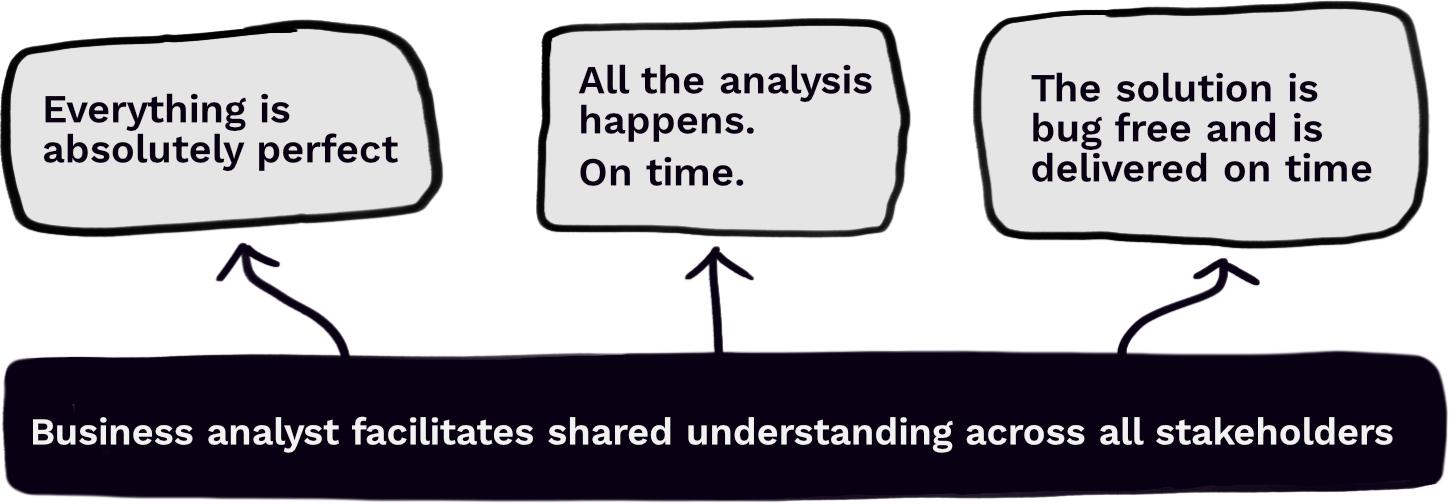

I don’t want to Han-splain™️ to you how to tackle these root causes. Especially since any advice would have to be contextual to be of any value to you. But I do want to show you what this map looks like when you’re BAwesome™️.

Welcome to BA heaven!

The following is the Future Reality Tree (FRT) for when you build shared understanding.

I mean, I’m obviously joking.

The truth is that business analysts operate in a complex system where every good outcome is predicated on many other things also going right (good development, good testing, and good everything). Doing our job and doing it well is necessary but, sadly, insufficient for delivering value.

Yeah, okay, cool. So we're great ... so what?

Okay okay okay, so what does this all mean?

Simple: We are too easily distracted from the what by the how.

We get obsessed with techniques, and we forget that the requirements don’t matter, but the shared understanding of the problem, scope, and options does. And equally, the stakeholder map doesn’t matter, but the relationships do. The as-built document format doesn’t matter, but the IP it represents does.

Now I’m as bad as any BA. I have my preferred approaches to all of the above.

But I shouldn’t.

First, I should ask why.

Then what.

And only then, how.

Because the performance of a system depends on how the parts work together, not how they act independently.

If we only focus on the artefacts that we create, and ignore the system in which we work, we might not see the impact we’re having. But to avoid that, we first need to see the system. Only then can we focus on the right things.

And that’s where systems thinking can help.

The end. But feedback is welcome!

Please do hit me up on LinkedIn or by email if you have any feedback! I’m always up for random questions, and I’d love to know what you think of this article!

Or if you’re interested in learning more about systems thinking, see the Read More section below for links 👇

Read more

Here’s a selection of resources I’ve been using.

- Start here: 👉 WTF is systems thinking by Simon Copsey

- Systems Thinking for Happy Staff and Elated Customers by Simon Copsey (YouTube video of a webinar)

- Current Reality Trees to Lift the Fog of War by Simon Copsey

- Thinking in Systems by Donella H. Meadows

- Using a current reality tree by Optimal Service Management

- Theory of constraints

- Goldratt’s Theory of Constraints: A Systems Approach to Continuous Improvement by William H. Dettmer

- Thinking for a Change: Putting the TOC Thinking Processes to Use by Lisa J. Scheinkopf